Just Ruky

The Null Island

Starting GoGrubIt. The place to find dietary advice you can trust.

Sunday, December 29, 2019

One of my 2020 goals is to promote healthy living, because non-communicable diseases are on the rise at an alarming rate.

The main reason for this is that most people are not following a healthy lifestyle.

With time our diet and lifestyle changed. We moved from home-cooked healthy meals to fast food.

We became couch potatoes and we stopped exercising. With time we end up developing diabetes, hypertension and fatty liver and so many other non-communicable diseases.

Did you know that most of these diseases can be prevented if we make a small change, that is by changing our dietary patterns and our lifestyle?

To make matters worse, food bloggers and websites are also promoting fast food places and unhealthy eating. And there are some food myths that are not helping the cause at all.

To solve all this, and to give people an idea about healthy living in plain and simple terms, I decided to start the GoGrubIt blog.

GoGrubIt hopes to be the go-to place for trusted dietary advice. I will be talking with doctors and nutritionists in the field, and digging through the latest research to find verified and up-to-date information for you.

So let's make 2020 a healthier new year for everyone. Let's follow good dietary habits and build a healthy community.

Follow and share the blog with your friends. Visit https://gogrubit.com for the latest dietary advice that you can trust.

Friday, August 5, 2016

Twitter has become a hostile place. Not that it hasn't been hostile in the past, just that it is going from bad to worse.

Even if I Tweet this blog post there won't be long before someone will say I'm an oldfag complaining about the newfags.

Twitter used to have an engaging community, and now publishing a tweet is like screaming into an empty place: no one will hear you, no one will see you, no one will notice what you say. There is very little interaction happening right now on Twitter.

I can't understand the reason, maybe it is because people are more engaged with local and global celebrities, or 'social celebrities', I don't know. Even with an algorithm in place if you post something on Facebook you will get at least some interaction from the community, but on Twitter now it's basically none, at least for me.

So I think I have come to a point where there is no reward or value in return for posting a Tweet. The main reason people like social media is the reward they get from the platform: on Facebook it may be the likes or the comments, on Snapchat the number of people who saw your story, on Reddit the upvotes and comments.

Although it's nothing big, that self satisfaction is the reason why people keep coming back and keep using a social platform. That satisfaction is the reward that people get in return for using that social platform.

And when you don't get that it's very hard to keep using that social platform. Without that value it becomes a burden, and the effort being put on making a tweet becomes a sour experience.

I am not sure the experience is the same for you, but this is what I feel, and I have come to a point where I am considering quitting Twitter. However, I am split on this, because although the community has become hostile I have made a lot of good friends and got a lot of opportunities because of Twitter, which I will lose if I leave. But if things don't change there will come a time when the scale tips more towards leaving Twitter than staying.

It's no wonder that people are using Twitter less and less and they are having problems in getting new users on board. If Twitter doesn't change their user experience I am sure more people will leave Twitter or stop using it altogether.


Tuesday, August 2, 2016

Genetic algorithms are another machine learning model inspired by nature, although they are not used that much these days because they have been overshadowed by deep learning and neural networks.


In nature, and in the theory of evolution, there is only the survival of the fittest: only the fittest genes are carried forward through the generations, crossing over of these genes during reproduction and mutations make the subsequent generations stronger, and the weaker genes die off.

The same idea is applied in a genetic algorithm, which is often used to search for solutions to NP-hard problems. However, from what I understood, you need to give a genetic algorithm a target to achieve, and unlike a neural network it can't predict and it is not good at pattern recognition.

But like I said it's very good at searching for solutions to such problems. For example, let's say

$$x + y + z + a + b = 40 $$

and you need to find values for $x,y,z,a,b$. You can implement a genetic algorithm for this purpose, and not only for this simple equation: you can use it for any complex equation.

In every genetic algorithm there are a few basic steps.

First you start with a population of a given number of chromosomes. Each chromosome holds randomly generated values that represent the variables $x,y,z,a,b$ (the example chromosomes in this post have four values each).

A single chromosome can look like this (we can also represent chromosomes in binary form):

chromosome1 = [5,12,2,1]

Then we evaluate the fitness of each chromosome using a fitness function (cost function), which gives us a value for how far each chromosome is from the target. Coming up with a good fitness function is the most important part of a genetic algorithm. The closer a chromosome is to the target the fitter it is, and fitness drops as a chromosome gets further away from the target.

In this example we add up the four values in a chromosome and deduct the sum from the target to see how far it is from the target.

chromosome1 = [5,12,2,1]

5+12+2+1 = 20

40-20 = 20

In the same way we calculate the fitness of all the chromosomes, and then we select the fittest chromosomes from them (the ones that are closest to the target).

To select the fittest chromosomes we commonly use a roulette wheel algorithm, which picks chromosomes at random but gives the fitter ones a higher probability of being picked.
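Roulette wheel selection can be sketched in a few lines of Python. This is only an illustration, not the code from my gist: the names `fitness` and `roulette_select` are made up for this example, and I am assuming the distance from the target is turned into a "higher is fitter" score with `1 / (1 + distance)`.

```python
import random

TARGET = 40

def fitness(chromosome):
    """Higher is fitter: 1.0 when the sum hits the target, smaller further away."""
    return 1.0 / (1 + abs(TARGET - sum(chromosome)))

def roulette_select(population, rng=random):
    """Pick one chromosome with probability proportional to its fitness."""
    weights = [fitness(c) for c in population]
    pick = rng.uniform(0, sum(weights))   # where the "ball" lands on the wheel
    running = 0.0
    for chromosome, weight in zip(population, weights):
        running += weight                 # each chromosome owns a slice of the wheel
        if pick < running:
            return chromosome
    return population[-1]                 # guard against rounding at the top edge

population = [[5, 12, 2, 1], [8, 7, 3, 1], [5, 12, 8, 7]]
print(roulette_select(population))
```

A chromosome that is closer to the target owns a bigger slice of the wheel, so it is picked more often, but weaker chromosomes still get a small chance, which keeps some variety in the population.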

Then, just like what happens in real DNA, we splice two fit chromosomes: we cut the arrays at given points and join the pieces to form a new chromosome.

chromosome1 = [5,12,2,1] = 20

chromosome2 = [8,7,3,1] = 19

chromosome1 >< chromosome2

[5,12] ><[8,7]

New chromosome = [5,12,8,7] = 32

So you can see the child chromosome is fitter and closer to the target than its parent chromosomes.

In the same way the selected chromosomes are crossed to form a child generation, and the weaker chromosomes are removed from the population.

We can also add some mutations to the chromosomes by putting random values into them, and we can set a mutation rate, for example 0.3 percent of the chromosomes should be mutated.

This cycle is repeated until the target is achieved or a given number of generations have been spawned.
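Putting the whole cycle together, a minimal sketch in Python could look like the one below. It is not the code from my gist. The population size, mutation rate, gene range and the `1 / (1 + distance)` weighting are all assumptions picked for illustration, the crossover is a plain single-point crossover (head of one parent, tail of the other), and I keep the best chromosome of each generation unchanged so the best result never gets worse.

```python
import random

TARGET = 40
GENES = 4              # four values per chromosome, as in the examples
POP_SIZE = 20          # assumed population size
MUTATION_RATE = 0.1    # assumed chance that a child gets one random gene
MAX_GENERATIONS = 1000

def distance(chromosome):
    """How far the sum of the genes is from the target (0 means solved)."""
    return abs(TARGET - sum(chromosome))

def evolve(seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, TARGET) for _ in range(GENES)]
                  for _ in range(POP_SIZE)]
    for generation in range(MAX_GENERATIONS):
        population.sort(key=distance)
        best = population[0]
        if distance(best) == 0:
            return best, generation
        # Fitness-proportional (roulette wheel style) weights.
        weights = [1.0 / (1 + distance(c)) for c in population]
        children = [best]                       # keep the fittest as it is
        while len(children) < POP_SIZE:
            mum, dad = rng.choices(population, weights=weights, k=2)
            cut = rng.randint(1, GENES - 1)     # crossover point
            child = mum[:cut] + dad[cut:]
            if rng.random() < MUTATION_RATE:    # occasional mutation
                child[rng.randrange(GENES)] = rng.randint(0, TARGET)
            children.append(child)
        population = children
    population.sort(key=distance)
    return population[0], MAX_GENERATIONS

best, generations = evolve()
print(best, sum(best), generations)
```

The loop stops as soon as one chromosome adds up to the target, or after `MAX_GENERATIONS` if it never gets there.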

It's pretty basic and very simple to implement. But there are some limitations:

- There should be a target, or result. Because we are calculating the fitness of the chromosomes in the population, it's difficult to implement a genetic algorithm without knowing the end result, which means it is not good at predicting or recognizing patterns unless you use it together with a neural network.

- Genetic algorithms are very inefficient. As the number of chromosomes increases, the time taken to come to a result also increases. Imagine having 10,000 chromosomes, going through all 10,000 of them mutating, crossing over and assessing fitness, and repeating the cycle for another 1000 generations: it takes a lot of time.

I used the following research paper in implementing this, https://arxiv.org/pdf/1308.467

This genetic algorithm was used for matching attacks in the Clash of Clans game, where each attacker's strength was given a value and each defender's defense was given a value, and the algorithm was used to decide who should attack whom.

I will not go into detail about the Clash of Clans problem because it is still ongoing research, and an interesting topic for a later time.

However this algorithm is not complete, because it tends to give duplicate attacks and also makes some absurd matches that are not practical. Just like in our example above, where the final answer can be

answer = [40,0,0,0] = 40

x = 40

y = 0

z = 0

a = 0

b = 0

This answer is also correct but it is not the answer that we are looking for. The genetic algorithm has no common sense about whether an answer is practical or not; it is only interested in achieving the target no matter what.

The code for the genetic algorithm is available on GitHub : https://gist.github.com/rukshn/f5cc571aeb2ca149d472b7701bf75734

Friday, July 29, 2016

Yahoo came to a slow, painful death a few days back when the majority of it was bought by Verizon for a lower price than what Yahoo paid to buy Broadcast.com.

Like I said in a previous post, Yahoo's problem was that it failed to innovate and failed to keep up with the new trends in the tech arena, unlike Facebook and Google, who fiercely adapt to changes rather than just sticking to what they are good at.

However, when it comes to Twitter, the once and still beloved micro-blogging platform: ten years from now, are we going to talk about Twitter the same way as we do today, or are we going to talk about it the way we are talking about Yahoo?

Twitter has a lot of similarities to Yahoo. Yahoo did what they were good at and stuck with it, and the same can be said about Twitter. Over the past two to three years how much has Twitter changed? How much value has it added for its beloved users?

I see very little change between Twitter two years back and today; they have added very little functionality and value to its user experience. Meanwhile the new kid on the block, Snapchat, has made inroads with their product. Snapchat has added stories, the discovery option where publishers can publish good content, and recently memories. There is a lot happening at Snapchat. What has happened on Twitter? Has it added any value for the users or advertisers?

Yes, Twitter has added cards, and now every publication is using them, but what good does that do? You have to scroll a long way down to see the same number of Tweets that we used to see without cards.

Also like Yahoo, Twitter made acquisitions like Vine and Periscope, which have yet to make a dent in the universe like they were supposed to. And Twitter has failed to address the harassment and bad experiences users face.

All this has come down to fewer monthly active users, stunted growth, and no clear path to making money.

There is a lot at stake for Twitter and a lot in common with Yahoo. It's up to them to change, or, just like Google beat Yahoo, it won't be long before Snapchat replaces Twitter.


Tuesday, July 26, 2016

Who would have thought back then that Yahoo would meet the sad end they came to today, being bought by Verizon for 4 billion dollars. They spent more money than that on their acquisitions, which failed spectacularly.

Yahoo is a good example and a lesson for every company: no matter how strong you are, no matter how powerful you are, if you don't adapt, if you don't evolve, you will be beaten by someone coming up behind you. Even if you are at the front of the race it's always good to look back at where your competitors are.

Lack of innovation, missed opportunities, poor decision making: one could write a whole thesis about Yahoo's failure.

Back in 1998 Google offered Yahoo their search platform. They didn't ask for much, just one million dollars. Yahoo rejected the offer. Today Google is worth 500 billion dollars, and Yahoo was bought for a hundred times less.

Yahoo didn't see, or didn't want to adapt to, the changing technological environment. They bought companies and screwed them up. I think Yahoo lacked a vision of what they wanted to be: a search engine? An email platform? A photo sharing service? They didn't have any idea. They also never had any idea about using big data or machine learning back in the day when others started using it.

That's my two cents. When I told my mom about this, her response was:


If Yahoo bought Google back in the day maybe we won't even have a thing called Google

Monday, July 25, 2016

In my second post I wrote about the perceptron and how to make one. There I said that you can't make an XOR gate with a perceptron, because a single perceptron can only solve linearly separable problems, and XOR is not a linearly separable problem. So I thought of writing this post on how you can solve this.

I spent last couple of days, learning numpy and driving myself to insanity in how to make the two layer perceptron to solve the XOR gate problem. At one point I came to the verge of giving up, but the fun part of learning something by yourself not just in coding but anything is the feeling you get when you solve the problem, it's like finding a pot of gold at the end of the rainbow.

So how can you solve the XOR gate, it's by putting two input neurons, two hidden layer neurons and one output neuron all together using 5 neurons instead of just using one like we did it in the second post.

Although I call it a perceptron it's a miniature neural net itself, using backpropagation to learn. Just like what we did in the second post, we assign random values for the weights and we give the training data and the output, and we backpropagate until the error becomes minimum.

Few things that I learned the hard-way when making this was,

And because I was learning numpy at the same time, there were times I got things wrong when using the dot multiplication. There were times like I said before I didn't ran the inputs through the activation function, which gave me all sorts of bizarre charts.

So after further reading, trial and error, calculating everything by hand and after finding them and fixing them

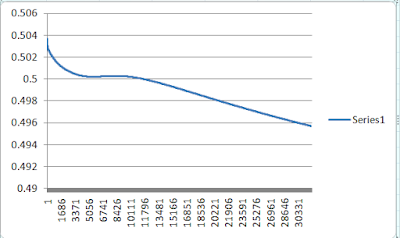

Finally saw the light at the end of the tunnel, after correcting all the error, the error came

As you can see I had to run many rounds unlike the previous single perceptron which reached to a acceptable minimum error around 10000 rounds I had to run this nearly the 13000+ rounds to get to a point where there is a drop in error (total error) also the learning rate ( $\eta$ ) was far less this time 0.25 compared to 0.5 in the previous example, that's one reason for it to take long time to get the correct weights.

Also as the starting weights are assigned randomly the learning rounds needed to reach to a reasonably low error can vary.

Although I can only plot 32000 rows in excel the error reached to 0.001009 by the time it reached 100,000 learning rounds.

And like here, there are times where it will never learn, this is because the weights are assigned randomly at the beginning. Even after 100,000 rounds the error has only reached 0.487738646. This might be due to the network has hit a local minimum and might work if we continue training it or it will just never learn.

You will never know whether a neural network will learn or not and what makes neural networks unpredictable.

I know making a XOR perceotron is no big deal as machine learning is light years ahead now. But the what matters is making something by yourself, and learning something out of it and the joy that you get at the end of reaching the destination.

I did not add any bias to this example like the previous one because I was having a tough time making this without the bias, but it's better to have a bias and will add

The code is available here - https://gist.github.com/rukshn/361a54eaec0266167051d2705ea08a5f

I spent the last couple of days learning numpy and driving myself to insanity over how to make a two-layer perceptron that solves the XOR gate problem. At one point I was on the verge of giving up, but the fun part of learning something by yourself, not just in coding but in anything, is the feeling you get when you solve the problem. It's like finding a pot of gold at the end of the rainbow.

So how can you solve the XOR gate? By putting together two input neurons, two hidden layer neurons and one output neuron, using 5 neurons altogether instead of just one like we did in the second post.

Figure: XOR perceptron structure
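One way to see why the extra hidden layer is enough is to wire such a network by hand. The sketch below is only an illustration and is not the network from my gist: the weights and the bias terms are hand-picked assumptions (my own network has no bias and learns its weights), and the inputs are fed in directly. One hidden neuron behaves roughly like OR, the other roughly like NAND, and the output neuron ANDs them together, which gives XOR.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def xor_net(x1, x2):
    # Hand-picked weights and biases, chosen only for illustration.
    h1 = sigmoid(20 * x1 + 20 * x2 - 10)     # close to 1 if either input is 1 (OR)
    h2 = sigmoid(-20 * x1 - 20 * x2 + 30)    # close to 0 only if both are 1 (NAND)
    return sigmoid(20 * h1 + 20 * h2 - 30)   # close to 1 only if both fire (AND)

for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, round(xor_net(x1, x2)))
```

Each hidden neuron draws one straight line through the inputs, and the output neuron combines the two lines, which is something a single perceptron cannot do.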

A few things that I learned the hard way when making this were:

- Every value should go through activation when it passes through a neuron. Even the inputs should pass through an activation function when they pass through the $i1$ and $i2$ neurons in the diagram. It took me some time to figure this out by myself.

- In my second post I used the positive value in the sigmoid function, not the negative value. If you use the positive value in the sigmoid function then the changes are added to the existing weights, but if you use the negative value in the sigmoid function then the weight changes are deducted from the existing weights.

- You might have to run the learning process for many rounds until you get to an acceptable error, because you might hit a local minimum, and you can get closer to the global minimum if you continue going through the training set.

- Also, unlike in my second post, it's better to calculate the total error. In the XOR there are four training sets, $[0,0],[0,1],[1,0],[1,1]$. So you run through the four training sets and add up the error at each one, which means adding up four errors ( $E_{total} = \sum_i \frac{1}{2} (target_i - output_i)^2$ ), and then save that total error for the graph, rather than plotting the error at each training set.

And because I was learning numpy at the same time, there were times I got things wrong when using the dot product. There were also times when, like I said before, I didn't run the inputs through the activation function, which gave me all sorts of bizarre charts.

Figure: One bizarre chart I got when things were not going in the right direction.

So after further reading, trial and error, calculating everything by hand and after finding them and fixing them

|

| Calculating it manually |

| ||

| Error changing (total error) per each round |

As you can see I had to run many rounds. The previous single perceptron reached an acceptable minimum error at around 10000 rounds, but I had to run this one for 13000+ rounds to get to a point where there is a drop in the total error. The learning rate ( $\eta$ ) was also lower this time, 0.25 compared to 0.5 in the previous example, which is one reason it takes a long time to get the correct weights.

Also, as the starting weights are assigned randomly, the number of learning rounds needed to reach a reasonably low error can vary.

Although I can only plot 32000 rows in Excel, the error had reached 0.001009 by the time it got to 100,000 learning rounds.

There are also times when it will never learn, because the weights are assigned randomly at the beginning. In one such run the error had only reached 0.487738646 even after 100,000 rounds. This might be because the network has hit a local minimum; it might work if we continue training it, or it might just never learn.

You will never know in advance whether a neural network will learn or not, and that is what makes neural networks unpredictable.

I know making an XOR perceptron is no big deal as machine learning is light years ahead now. But what matters is making something by yourself, learning something out of it, and the joy that you get on reaching the destination.

I did not add any bias to this example like the previous one because I was having a tough time making this without the bias, but it's better to have a bias and will add

The code is available here - https://gist.github.com/rukshn/361a54eaec0266167051d2705ea08a5f

Friday, July 22, 2016

In the last post I wrote about how a biological neuron works, and in this post I'm going to write about perceptron and how to make one.

The building block of our nervous system is the neuron, which is its basic functional unit: it fires if the input meets the threshold, and it does not fire if it doesn't. A perceptron is the same; you can call it the basic functional unit of a neural network. It takes inputs, does some calculations, checks whether the output reaches a threshold and fires accordingly.

Perceptrons can only do basic classification, called linear classification. It's like drawing a line and separating a set of data into two parts; a perceptron can't sort the data into multiple classes.

And you must not forget that the inputs $x1$ and $x2$ should be numbers, because they are being multiplied by weights (numbers). So there has to be a way to convert a text input into a numeric representation, which is a different topic.

The inputs are multiplied by their weights on the way into the perceptron, and inside the perceptron all these weighted inputs are added together.

So adding them all together

$$\sum\ f = (x1 * w1)+(x2 * w2)$$

And this sum then passes through an activation function, there are many activation functions and the ones used commonly are the hyperbolic tangent and the sigmoid function.

In this example I am using the sigmoid function.

$$S(t) = \frac{1}{1 + e^{-t}}$$

So the output of the perceptron is what comes out of the activation function.
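The weighted sum and the sigmoid can be written out in a few lines of Python. This is just a sketch of the two formulas above; the function names and the example weights are made up for illustration.

```python
import math

def sigmoid(t):
    """Sigmoid activation S(t) = 1 / (1 + e^-t)."""
    return 1.0 / (1.0 + math.exp(-t))

def perceptron_output(x1, x2, w1, w2):
    """Weighted sum of the two inputs, passed through the sigmoid."""
    total = (x1 * w1) + (x2 * w2)
    return sigmoid(total)

# Hypothetical weights: the weighted sum is 0, so the sigmoid gives 0.5.
print(perceptron_output(1, 1, 0.5, -0.5))
```

Whatever the weights are, the sigmoid squeezes the sum into a value between 0 and 1, which is what we compare against the target.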

Like I said before, perceptrons learn by adjusting their weights until they produce the desired output. This is done by a method called backpropagation.

In simple terms, backpropagation starts by calculating the error between the target and the output, and then adjusts the weights backwards, from the output weights to the hidden weights to the input weights (in a multilayered neural network).

In our simple perceptron it means adjusting the $w1$ and $w2$.

The equations and more details about backpropagation can be found here - https://web.archive.org/web/20150317210621/https://www4.rgu.ac.uk/files/chapter3%20-%20bp.pdf

Let's take the AND gate first, the truth table for the AND gate is,

$$x=0 | y = 0 | output = 0$$

$$x=1 | y = 0 | output = 0$$

$$x=0 | y = 1 | output = 0$$

$$x=1 | y = 1 | output = 1$$

We give the two inputs $x$ and $y$, calculate the difference between the desired output and the output of our perceptron (this is the error), and backpropagate to adjust the weights until the error becomes very small. This is called supervised learning.

So we start with two random values for the weights using Math.random() and then pass in the first set of data from the truth table. We calculate the error, backpropagate and adjust the weights just once. Then we present the second set of data and adjust the weights once, then the third set, then the fourth, and the cycle is repeated.

Make sure you don't present the first set of data and adjust the weights until the error is minimal, and only then move on to the second set of data and do the same. That will not make the perceptron learn all four patterns.
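The training cycle described above can be sketched in Python like this. It is not the code from my gist: the function names, the fixed random seed and the weight range are assumptions for illustration, and I am assuming a bias weight is trained along with the two input weights. The update is the usual rule for a single sigmoid neuron, where each weight moves by $\eta$ times the error times the slope of the sigmoid times its input.

```python
import math
import random

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

AND_TABLE = [((0, 0), 0), ((1, 0), 0), ((0, 1), 0), ((1, 1), 1)]

def train(table, rounds=10000, eta=0.5, seed=1):
    rng = random.Random(seed)
    # Random starting weights; wb is the weight of a fixed bias input of 1.
    w1, w2, wb = (rng.uniform(-1, 1) for _ in range(3))
    for _ in range(rounds):
        # Present every pattern once per round, adjusting the weights once each.
        for (x, y), target in table:
            out = sigmoid(x * w1 + y * w2 + wb)
            delta = (target - out) * out * (1 - out)   # error times sigmoid slope
            w1 += eta * delta * x
            w2 += eta * delta * y
            wb += eta * delta
    return w1, w2, wb

w1, w2, wb = train(AND_TABLE)
for (x, y), target in AND_TABLE:
    print(x, y, target, round(sigmoid(x * w1 + y * w2 + wb), 3))
```

The inner loop is the important part: all four rows of the truth table are shown in every round, with one small weight adjustment per row, instead of training on one row until it is perfect.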

I repeated the cycle 10000 times and plotted the error on a graph.

As you can see, the error keeps decreasing and settles at a very low, barely noticeable value.

Because the error is now small, the perceptron has been trained. We can take those values of the weights, feed in the data of the truth table and see how close the perceptron's output is to the desired output.

As you can see, the perceptron has come pretty close to the target of the AND gate output.

We can do the same for the OR gate, starting with random values for the weights and backpropagating as before until we reach the point of minimum error.

And you can see that the OR gate perceptron has also come close to the desired target.

What about XOR?

$$\begin{array}{cc|c}
x & y & \text{output} \\ \hline
0 & 0 & 0 \\
1 & 0 & 1 \\
0 & 1 & 1 \\
1 & 1 & 0
\end{array}$$

You can see that the chart for XOR is not the same as the ones we got for the AND and OR gates. Why is that?

So what is causing this error? Why can't the perceptron act as an XOR gate? How can we solve this? Hopefully I will answer that in a future post.

The code for the perceptron is available here - https://gist.github.com/rukshn/d4923e23d80697d2444d077eb1673e68

In the code you will see a variable called bias. A bias is not a must, but it is good to add one. In $y = mx + c$ the bias is the $c$. On a chart, without the $c$ the line goes through $(0,0)$, but $c$ lets you shift where the line sits; the bias does the same. We pass the bias as a fixed input with its own weight, which we adjust just like any other weight using backpropagation.

Also, $\eta$ is called the learning factor. You can change it to adjust the speed of learning; a moderate value is better.

The building block of our nervous system is the neuron, its basic functional unit. It fires if its input meets the threshold and does not fire otherwise. A perceptron is the same; you can call it the basic functional unit of a neural network. It takes inputs, does some calculations, checks whether the output reaches a threshold and fires accordingly.

Figure: A simple diagram of a perceptron. It takes two inputs x1 and x2, does some calculations f and gives an output.

How a Perceptron Works

The perceptron takes two inputs, $x1$ and $x2$, which pass through the input nodes where they are multiplied by their respective weights. The inputs $x1$ and $x2$ are like the stimulus to a neuron. Don't forget that $x1$ and $x2$ must be numbers, because they are multiplied by weights (also numbers). So there has to be a way to convert a text input into a numeric representation, which is a different topic.

What enters the perceptron is each input multiplied by its weight, and inside the perceptron these weighted inputs are added together.

Adding them all together gives:

$$\sum\ f = (x1 * w1)+(x2 * w2)$$

This sum then passes through an activation function. There are many activation functions; two commonly used ones are the hyperbolic tangent and the sigmoid function.

In this example I am using the sigmoid function.

$$S(t) = \frac{1}{1 + e^{-t}}$$

So the output of the perceptron is what comes out of the activation function.

How Perceptrons Learn
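To make the steps above concrete, here is a minimal sketch of the forward pass in NodeJs. The function names `sigmoid` and `forward` and the example weights are made up for illustration; they are not taken from the gist linked in this post.

```javascript
// Sigmoid activation: squashes any real number into the range (0, 1).
function sigmoid(t) {
  return 1 / (1 + Math.exp(-t));
}

// Forward pass of a two-input perceptron (no bias, to match the sum above).
function forward(x1, x2, w1, w2) {
  const sum = x1 * w1 + x2 * w2; // weighted sum of the inputs
  return sigmoid(sum);           // output after the activation function
}

// The weights here are arbitrary, chosen only for illustration.
console.log(forward(1, 0, 0.4, -0.2));
console.log(forward(0, 0, 0.4, -0.2)); // the sum is 0, so this is sigmoid(0) = 0.5
```

Note that the output is never exactly 0 or 1; the sigmoid only gets close to them as the weighted sum grows large in either direction.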

As I said before, perceptrons learn by adjusting their weights until the output matches the desired output. This is done by a method called backpropagation.

In simple terms, backpropagation starts by calculating the error between the target and the output, and then adjusts the weights backwards: from the output weights, to the hidden weights, to the input weights (in a multilayered neural network).

In our simple perceptron it means adjusting $w1$ and $w2$.

The equations and more details about backpropagation can be found here - https://web.archive.org/web/20150317210621/https://www4.rgu.ac.uk/files/chapter3%20-%20bp.pdf
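For a single sigmoid perceptron, one adjustment can be sketched as below. This uses a common form of the update for a sigmoid unit (error times the slope of the sigmoid, scaled by a learning rate `eta`); treat it as an assumption about the method rather than a copy of the linked gist, and note that `trainStep` is a hypothetical name.

```javascript
function sigmoid(t) {
  return 1 / (1 + Math.exp(-t));
}

// One training step for a two-input sigmoid perceptron.
// weights = [w1, w2], inputs = [x1, x2], eta is the learning rate.
function trainStep(weights, inputs, target, eta) {
  const sum = inputs[0] * weights[0] + inputs[1] * weights[1];
  const output = sigmoid(sum);
  // Error term: (target - output) scaled by the sigmoid's slope, output * (1 - output).
  const delta = (target - output) * output * (1 - output);
  // Move each weight in proportion to the input that fed it.
  const updated = weights.map((w, i) => w + eta * delta * inputs[i]);
  return { output, updated };
}

// The output is too high for a target of 0, so both weights are nudged down.
const step = trainStep([0.5, 0.5], [1, 1], 0, 0.5);
console.log(step.output, step.updated);
```

A weight whose input was 0 does not move, because that input contributed nothing to the output.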

Let's Make a Perceptron.

So we are going to make a perceptron. It won't do much and will only act as an 'AND' or 'OR' gate. The design of the perceptron is the same as in the image above. I am using NodeJs, but this can be done in a simpler way using Python and Numpy, which I will hopefully set up on my computer for future use.

Let's take the AND gate first. The truth table for the AND gate is:

$$\begin{array}{cc|c}
x & y & \text{output} \\ \hline
0 & 0 & 0 \\
1 & 0 & 0 \\
0 & 1 & 0 \\
1 & 1 & 1
\end{array}$$

We give the perceptron the two inputs $x$ and $y$ and calculate the difference between the desired output and the perceptron's output, which is the error. We then backpropagate and adjust the weights until the error becomes very small. This is called supervised learning.

We start with random values for the weights using Math.random() and pass in the first set of data from the truth table. Then we calculate the error, backpropagate and adjust the weights just once. Next we present the second set of data and adjust the weights once, then the third set and the fourth set, and the whole cycle is repeated.

Make sure you don't present the first set of data and keep adjusting the weights until the error is minimal, and only then move on to the second set and do the same. Trained that way, the perceptron will not learn all four patterns.
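The training cycle described above can be sketched roughly like this. It is not the code from the gist: the names `train` and `predict`, the learning rate of 0.5 and the bias input fixed at 1 are assumptions made for this example.

```javascript
function sigmoid(t) {
  return 1 / (1 + Math.exp(-t));
}

// Truth table for the AND gate: [x, y, target].
const AND = [[0, 0, 0], [1, 0, 0], [0, 1, 0], [1, 1, 1]];

// Train a two-input perceptron with a bias input fixed at 1 (an assumption).
function train(samples, epochs = 10000, eta = 0.5) {
  // Random starting weights: w[0] and w[1] for the inputs, w[2] for the bias.
  const w = [Math.random(), Math.random(), Math.random()];
  const errors = []; // summed squared error of each cycle, handy for plotting
  for (let epoch = 0; epoch < epochs; epoch++) {
    let total = 0;
    // Present every pattern once per cycle and adjust the weights once for each.
    for (const [x, y, target] of samples) {
      const inputs = [x, y, 1];
      const output = sigmoid(inputs[0] * w[0] + inputs[1] * w[1] + inputs[2] * w[2]);
      const delta = (target - output) * output * (1 - output);
      for (let i = 0; i < w.length; i++) w[i] += eta * delta * inputs[i];
      total += (target - output) ** 2;
    }
    errors.push(total);
  }
  return { w, errors };
}

// Output of the trained perceptron for one pair of inputs.
function predict(w, x, y) {
  return sigmoid(x * w[0] + y * w[1] + w[2]);
}

const { w, errors } = train(AND);
console.log("first error:", errors[0], "last error:", errors[errors.length - 1]);
for (const [x, y, target] of AND) {
  console.log(x, y, "target:", target, "output:", predict(w, x, y).toFixed(3));
}
```

Swapping the `AND` table for the OR truth table is enough to train the other gate with the same loop. The weights start random, so the exact numbers differ from run to run.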

I repeated the cycle 10000 times and plotted the error on a graph.

Figure: Error of the AND gate changing over time

Because the error is now small, the perceptron has been trained. We can take those values of the weights, feed in the data of the truth table and see how close the perceptron's output is to the desired output.

Figure: Target and output values of the perceptron for the AND gate

We can do the same for the OR gate, starting with random values for the weights and backpropagating as before until we reach the point of minimum error.

Figure: Error of the OR gate changing over time

Figure: Target and output values of the perceptron for the OR gate

What about XOR?

$$\begin{array}{cc|c}
x & y & \text{output} \\ \hline
0 & 0 & 0 \\
1 & 0 & 1 \\
0 & 1 & 1 \\
1 & 1 & 0
\end{array}$$

You can see that the chart for XOR is not the same as the ones we got for the AND and OR gates. Why is that?

Figure: Error of the XOR gate changing over time

We can also see that the output for the XOR gate is not correct.

Figure: Target and output values of the perceptron for the XOR gate
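You can reproduce the XOR result with a short sketch like the one below. As before, the learning rate, the bias input fixed at 1 and the name `trainXor` are assumptions for this example, not the gist's code. Whatever weights it ends up with, a single perceptron of this kind gets at least one of the four XOR patterns on the wrong side of 0.5.

```javascript
function sigmoid(t) {
  return 1 / (1 + Math.exp(-t));
}

// Truth table for the XOR gate: [x, y, target].
const XOR = [[0, 0, 0], [1, 0, 1], [0, 1, 1], [1, 1, 0]];

// Same training cycle as for AND and OR, applied to the XOR truth table.
function trainXor(epochs = 10000, eta = 0.5) {
  const w = [Math.random(), Math.random(), Math.random()];
  for (let epoch = 0; epoch < epochs; epoch++) {
    for (const [x, y, target] of XOR) {
      const inputs = [x, y, 1]; // the last input is the fixed bias
      const output = sigmoid(inputs[0] * w[0] + inputs[1] * w[1] + inputs[2] * w[2]);
      const delta = (target - output) * output * (1 - output);
      for (let i = 0; i < w.length; i++) w[i] += eta * delta * inputs[i];
    }
  }
  return w;
}

const w = trainXor();
let correct = 0;
for (const [x, y, target] of XOR) {
  const output = sigmoid(x * w[0] + y * w[1] + w[2]);
  if (Math.round(output) === target) correct++;
  console.log(x, y, "target:", target, "output:", output.toFixed(3));
}
console.log(correct + " of 4 patterns correct");
```

More training cycles or a different learning rate will not change this; the count never reaches 4.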

The code for the perceptron is available here - https://gist.github.com/rukshn/d4923e23d80697d2444d077eb1673e68

In the code you will see a variable called bias. A bias is not a must, but it is good to add one. In $y = mx + c$ the bias is the $c$. On a chart, without the $c$ the line goes through $(0,0)$, but $c$ lets you shift where the line sits; the bias does the same. We pass the bias as a fixed input with its own weight, which we adjust just like any other weight using backpropagation.

Also, $\eta$ is called the learning factor. You can change it to adjust the speed of learning; a moderate value is better.
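Here is a small sketch of what the bias does. The function `output` and the parameter name `wb` (the bias weight) are hypothetical names for this example, and the weight values are arbitrary.

```javascript
function sigmoid(t) {
  return 1 / (1 + Math.exp(-t));
}

// Perceptron output with the bias passed in as a fixed extra input.
// bias is the fixed input value and wb is its own adjustable weight.
function output(x1, x2, w1, w2, bias, wb) {
  return sigmoid(x1 * w1 + x2 * w2 + bias * wb);
}

// Without a bias (bias = 0) the input (0, 0) always gives sigmoid(0) = 0.5,
// whatever w1 and w2 are.
console.log(output(0, 0, 3.2, -1.7, 0, 0));

// With a bias of 1 and a negative bias weight, the same input can move toward 0.
console.log(output(0, 0, 3.2, -1.7, 1, -4));
```

This is why the AND gate benefits from a bias: its target for the input (0, 0) is 0, and without a bias the output for that input is stuck at 0.5.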
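To get a feel for $\eta$, the sketch below trains the AND gate for the same number of cycles with a few different values and prints the error left at the end. The starting weights are fixed at 0.5 instead of random so the runs are comparable, and the values of $\eta$, the 1000 cycles and the name `finalError` are assumptions chosen for this example. It only compares small and moderate values; it does not show what happens when $\eta$ is very large.

```javascript
function sigmoid(t) {
  return 1 / (1 + Math.exp(-t));
}

// Truth table for the AND gate: [x, y, target].
const AND = [[0, 0, 0], [1, 0, 0], [0, 1, 0], [1, 1, 1]];

// Train on AND and return the summed squared error of the last cycle.
function finalError(eta, epochs = 1000) {
  const w = [0.5, 0.5, 0.5]; // fixed start: two input weights and a bias weight
  let total = 0;
  for (let epoch = 0; epoch < epochs; epoch++) {
    total = 0;
    for (const [x, y, target] of AND) {
      const inputs = [x, y, 1]; // the last input is the fixed bias
      const output = sigmoid(inputs[0] * w[0] + inputs[1] * w[1] + inputs[2] * w[2]);
      const delta = (target - output) * output * (1 - output);
      for (let i = 0; i < w.length; i++) w[i] += eta * delta * inputs[i];
      total += (target - output) ** 2;
    }
  }
  return total;
}

for (const eta of [0.01, 0.1, 0.5]) {
  console.log("eta =", eta, "error:", finalError(eta).toFixed(4));
}
```

With the smallest value the weights barely move in 1000 cycles, so more error is left over than with the larger one.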
